George Kiagiadakis

November 17, 2017

Earlier this year I worked on a GStreamer plugin called “ipcpipeline”. It provides elements that make it possible to interconnect GStreamer pipelines running in different processes. In this blog post I explain how the plugin works and why you might want to use it in your application.

In GStreamer, pipelines are meant to be built and run inside a single process. Normally one wouldn’t even think about involving multiple processes for a single pipeline. You can (and should) involve multiple threads, of course, which is easily done using the queue element, in order to do parallel processing. But since you can involve multiple threads, why would you want to involve multiple processes as well?

Splitting part of a pipeline into a different process is useful when one or more elements need to be isolated for security reasons. Imagine an application that uses a hardware video decoder and therefore has device access privileges. Also imagine that the same pipeline contains elements that download and parse video content directly from a network server, as most Video On Demand applications do. I don’t mean to say that GStreamer is not secure, but it is a good idea to think ahead and make it as hard as possible for an attacker to take advantage of potential security flaws. In theory, someone could exploit a bug in the container parser by sending it crafted data from a fake server, and then use those device access privileges to take control of other things or to crash the system. ipcpipeline can help to prevent that, because the parser can run in a process that does not hold those privileges.

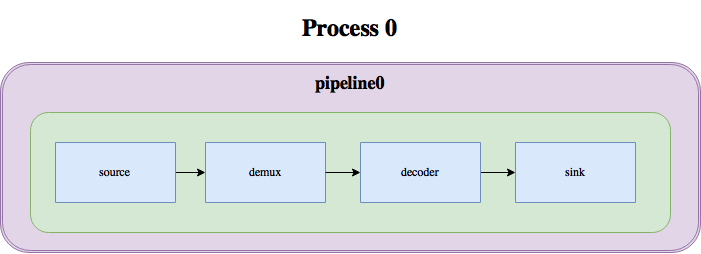

The (oversimplified) diagram below shows what the media pipeline of a video player would look like with GStreamer:

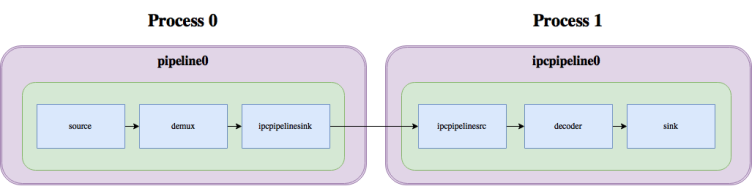

With ipcpipeline, this pipeline can be split into two processes, like this:

As you can see, the split mainly involves two elements: ipcpipelinesink, which serves as the sink for the first pipeline, and ipcpipelinesrc, which serves as the source for the second pipeline. These two elements internally talk to each other through a unix pipe or socket, transferring buffers, events, queries and messages over this channel, thus linking the two pipelines together.
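To make the transport idea more concrete, here is a minimal Python sketch of two processes exchanging framed messages over a unix socket pair. It is not the plugin's actual wire protocol: the length-prefix framing, the function names (`send_msg`, `recv_msg`, `demo`) and the `ack:` reply are all assumptions made up for illustration. In a real application, each process would hand its end of the socket to ipcpipelinesink or ipcpipelinesrc instead of reading and writing it directly.

```python
import os
import socket
import struct


def send_msg(sock, payload):
    """Send one message: a 4-byte big-endian length followed by the payload."""
    sock.sendall(struct.pack("!I", len(payload)) + payload)


def recv_exact(sock, n):
    """Read exactly n bytes, raising if the peer closes the socket early."""
    data = b""
    while len(data) < n:
        chunk = sock.recv(n - len(data))
        if not chunk:
            raise ConnectionError("peer closed the socket")
        data += chunk
    return data


def recv_msg(sock):
    """Receive one length-prefixed message and return its payload."""
    (length,) = struct.unpack("!I", recv_exact(sock, 4))
    return recv_exact(sock, length)


def demo():
    """Fork a hypothetical 'slave' process and exchange one message with it."""
    master_end, slave_end = socket.socketpair()  # connected unix stream sockets
    pid = os.fork()
    if pid == 0:
        # Child process: plays the role of the process hosting ipcpipelinesrc.
        try:
            master_end.close()
            data = recv_msg(slave_end)
            send_msg(slave_end, b"ack:" + data)
            slave_end.close()
        finally:
            os._exit(0)
    # Parent process: plays the role of the process hosting ipcpipelinesink.
    slave_end.close()
    send_msg(master_end, b"buffer-0")
    reply = recv_msg(master_end)
    master_end.close()
    os.waitpid(pid, 0)
    return reply


if __name__ == "__main__":
    print(demo())
```

The point of the sketch is only that a single bidirectional descriptor per process is enough to carry data in one direction and replies in the other, which is what lets the two elements link the pipelines together.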

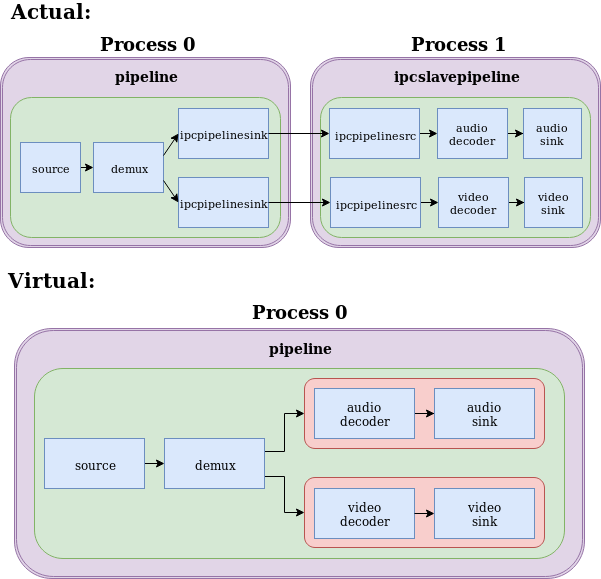

This mechanism doesn’t look very special, though. You might be wondering at this point, what is the difference between using ipcpipeline and some other existing mechanism like a pair of fdsink/fdsrc or udpsink/udpsrc or RTP? What is special about these elements is that the two pipelines behave as if they were a single pipeline, with the elements of the second one being part of a GstBin in the first one:

The diagram above illustrates how you can think of a pipeline that uses the ipcpipeline mechanism. As you can see, ipcpipelinesink behaves as a GstBin that contains the whole remote pipeline. This practically means that whenever you change the state of ipcpipelinesink, the remote pipeline’s state changes as well. It also means that all messages, events and queries that make sense are forwarded from one pipeline to the other, trying to implement as closely as possible the behavior that a GstBin would have.
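The following toy model, in plain Python and without GStreamer, is one way to picture this bin-like behavior. Every class here (`Element`, `Pipeline`, `RemotePipeline`, `ProxySink`) is hypothetical and exists only for this sketch. The remote pipeline lives in the same process for simplicity, where the real elements would go through the IPC channel.

```python
class Element:
    """A stand-in for a pipeline element that only tracks its state."""

    def __init__(self, name):
        self.name = name
        self.state = "NULL"
        self.parent = None

    def set_state(self, state):
        self.state = state


class Pipeline(Element):
    """A container that propagates state changes to its children."""

    def __init__(self, name):
        super().__init__(name)
        self.children = []
        self.bus = []  # messages posted on this pipeline

    def add(self, *elements):
        for element in elements:
            element.parent = self
            self.children.append(element)

    def set_state(self, state):
        for child in self.children:
            child.set_state(state)
        super().set_state(state)

    def post(self, message):
        self.bus.append(message)


class RemotePipeline(Pipeline):
    """The 'slave' side: notifies a listener about every posted message."""

    def __init__(self, name):
        super().__init__(name)
        self.on_message = None

    def post(self, message):
        super().post(message)
        if self.on_message is not None:
            self.on_message(message)


class ProxySink(Element):
    """Plays the role of ipcpipelinesink: looks like one element to the
    master pipeline, but mirrors state to the remote pipeline and relays
    its messages back."""

    def __init__(self, name, remote):
        super().__init__(name)
        self.remote = remote
        remote.on_message = self.forward

    def set_state(self, state):
        self.remote.set_state(state)  # the remote pipeline follows the sink
        super().set_state(state)

    def forward(self, message):
        if self.parent is not None:
            self.parent.post(message)


slave = RemotePipeline("slave")
slave.add(Element("decoder"), Element("videosink"))

master = Pipeline("master")
master.add(Element("source"), Element("demuxer"), ProxySink("ipcpipelinesink", slave))

master.set_state("PLAYING")         # one call on the master...
slave.post("message-from-slave")    # ...and slave messages show up on its bus
```

After the last two lines, every element in both pipelines is in the `PLAYING` state and the message posted on the slave also appears on the master's `bus`, which is the behavior the application would expect from a regular bin.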

This design practically allows you to modify an existing application to use this split-pipeline mechanism without having to change the pipeline control logic or implement your own IPC for controlling the second pipeline. It is all integrated in the mechanism already.

ipcpipeline follows a master-slave design. The pipeline that controls the state changes of the other pipeline is called the “master”, while the other one is called the “slave”. In the above example, the pipeline that contains the ipcpipelinesink element is the “master”, while the other one is the “slave”. At the time of writing, the opposite setup is not implemented, so it’s always the downstream part of the pipeline that can be slaved and ipcpipelinesink is always the “master”.

While there can be only one “master” pipeline, there can be multiple “slave” ones. This makes it possible, for example, to split an audio decoder and a video decoder into different processes:

It is also possible to have multiple ipcpipelinesink elements connect to the same slave pipeline. In this case, the slave pipeline will follow the state that is closest to PLAYING between the two states that it will get from the two ipcpipelinesinks. Also, messages from the slave pipeline will only be forwarded through one of the two ipcpipelinesinks, so you will not notice any duplicate messages. Behavior should be exactly the same as in the split slaves scenario.
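The “closest to PLAYING” rule can be sketched in a few lines of Python. The `State` enum mirrors the ordering of GStreamer's element states (NULL, READY, PAUSED, PLAYING), while `slave_target_state` and the sink names are hypothetical and only illustrate the selection. This is not the plugin's actual code.

```python
from enum import IntEnum


class State(IntEnum):
    """Element states, ordered from furthest to closest to PLAYING."""
    NULL = 1
    READY = 2
    PAUSED = 3
    PLAYING = 4


def slave_target_state(requested):
    """Return the state the slave pipeline should follow.

    `requested` maps each ipcpipelinesink (by a hypothetical name) to the
    state it last asked for. The slave follows the one closest to PLAYING.
    """
    return max(requested.values(), default=State.NULL)


requests = {"audio_sink": State.PAUSED, "video_sink": State.PLAYING}
print(slave_target_state(requests).name)  # PLAYING
```

With this rule, the slave pipeline only drops to a lower state once every ipcpipelinesink connected to it has asked for that state or a lower one.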

ipcpipeline is part of the GStreamer bad plugins set (gst-plugins-bad). Documentation is included with the code and there are also some examples that you can try out to get familiar with it. Happy hacking!
